2.19.5.3. Limit indexing frequency

Search engine indexing bots regularly request site pages to refresh the information stored in their databases, which search engines then use to display results. Their work is useful, but a crawl rate that is too high can put unnecessary load on the server.

Attention!

Since January 8, 2024, Google no longer offers a separate crawl rate setting for Googlebot. The bot adjusts how often it crawls based solely on how quickly site pages respond and which response codes they return. For more details, see the Google Developers Blog.

Limiting the indexing frequency leads to a lower site ranking or even removal from the search index, so the right approach is not to impose limits but to optimize the site itself. The site developer should analyze the site's performance, find the bottlenecks, and eliminate them to make pages respond faster.

Possible solutions include:

- Enabling OPcache.

- Switching to a plan with more resources if the current plan's resources are insufficient (see Server resources consumption charts).

However, if the site's performance currently matters more to you than its position in the index, you can use the site's own code to deliberately slow down responses to Googlebot or return non-success response codes to it, such as 500, 503 or 429.
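As an illustration of returning a non-success code to Googlebot from the site's own code, here is a minimal sketch written as Python WSGI middleware. It is an assumption for demonstration only: the real site may run on a different stack (for example, PHP), and the names `throttle_googlebot`, `hello_app`, and `status_for` are hypothetical. It answers with 503 Service Unavailable, one of the codes Google documents for temporarily reducing crawling, and identifies the bot by a simple User-Agent substring match.

```python
# Hypothetical WSGI middleware: answers requests whose User-Agent mentions
# Googlebot with 503 Service Unavailable and passes everything else through.
from typing import Callable, Iterable, List

WSGIApp = Callable[[dict, Callable], Iterable[bytes]]

# Assumption: a case-insensitive substring match is enough for this sketch.
# It also matches variants such as "Googlebot-Image", and the header can be
# spoofed, so a production check would verify the crawler separately.
CRAWLER_MARKER = "googlebot"


def throttle_googlebot(app: WSGIApp) -> WSGIApp:
    """Wrap a WSGI app so that Googlebot receives 503 instead of the page."""

    def middleware(environ: dict, start_response: Callable) -> Iterable[bytes]:
        user_agent = environ.get("HTTP_USER_AGENT", "").lower()
        if CRAWLER_MARKER in user_agent:
            body = b"Service temporarily unavailable\n"
            start_response(
                "503 Service Unavailable",
                [("Content-Type", "text/plain"), ("Content-Length", str(len(body)))],
            )
            return [body]
        # Regular visitors and other bots get the normal response.
        return app(environ, start_response)

    return middleware


def hello_app(environ: dict, start_response: Callable) -> List[bytes]:
    """Stand-in for the real site (hypothetical)."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"Hello\n"]


application = throttle_googlebot(hello_app)


def status_for(wsgi_app: WSGIApp, user_agent: str) -> str:
    """Call a WSGI app with a minimal fake request and return its status line."""
    statuses: List[str] = []
    wsgi_app({"HTTP_USER_AGENT": user_agent}, lambda status, headers: statuses.append(status))
    return statuses[0]


if __name__ == "__main__":
    googlebot_ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
    print(status_for(application, googlebot_ua))   # 503 Service Unavailable
    print(status_for(application, "Mozilla/5.0"))  # 200 OK
```

For a quick local trial, `application` can be served with the standard library, e.g. `wsgiref.simple_server.make_server("", 8000, application).serve_forever()`. Such a rule should stay temporary, since it directly affects how Google indexes the site.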

Bing

- Log in to Bing Webmaster or register.

- Add your domain and verify it (if it has not been verified yet).

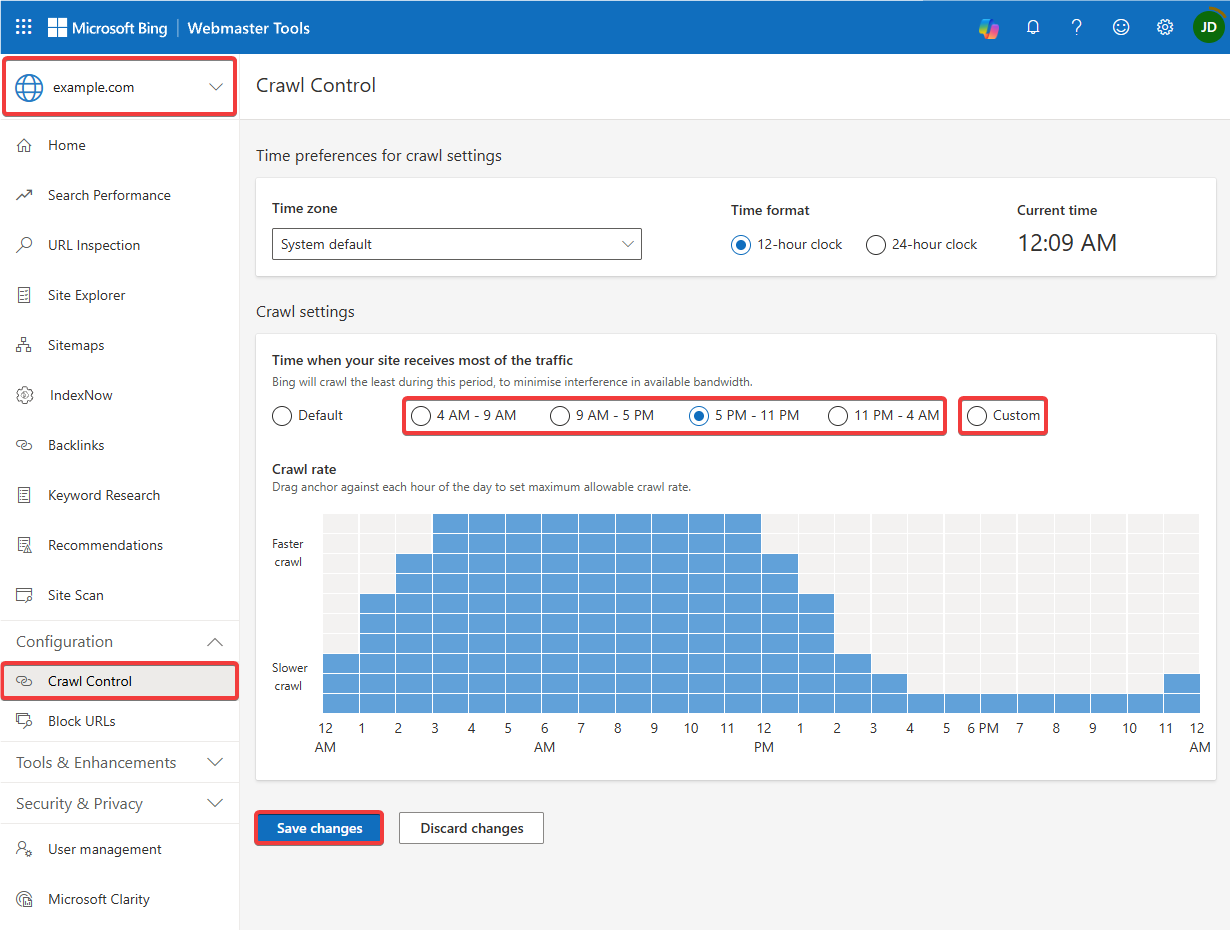

- Select your site, go to "Configuration → Crawl Control", choose one of the preset crawl rate templates or select "Custom" and set the crawl rate for each hour of the day, then save your changes.

Crawl-delay

Attention!

Some bots may ignore the Crawl-delay directive entirely (Googlebot, for example, does not support it).

Most indexing bots follow the rules in the site's robots.txt file, which is where you can set the interval between their requests.

In the site's root directory, create a robots.txt file (or edit the existing one) and add the Crawl-delay directive to it. Instead of *, you can specify the User-Agent of the bot whose indexing frequency should be limited; instead of 3, specify the number of seconds the bot should wait between requests:

```text
User-Agent: *
Disallow:
Crawl-delay: 3
```
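Before relying on the file, you can check which delay a parser actually reads from it. The sketch below uses Python's standard `urllib.robotparser` module as a stand-in; real bots use their own parsers, which may behave differently. The bingbot-specific group, the sample bot names, and the helper `crawl_delay_for` are illustrative assumptions. The last case shows why the directives should sit on consecutive lines: Python's parser treats a blank line as the end of a group.

```python
# Check which Crawl-delay a given bot would read from a robots.txt file,
# using Python's standard-library parser.
from typing import Optional
from urllib.robotparser import RobotFileParser

# The example from this article: any bot, no disallowed paths, 3 s between requests.
ROBOTS_TXT = """\
User-Agent: *
Disallow:
Crawl-delay: 3
"""

# Hypothetical variant: a stricter group just for bingbot, the default for the rest.
ROBOTS_TXT_PER_BOT = """\
User-Agent: *
Disallow:
Crawl-delay: 3

User-Agent: bingbot
Disallow:
Crawl-delay: 10
"""

# Same directives separated by blank lines: Python's parser closes the group
# after "User-Agent: *", so Disallow and Crawl-delay are ignored.
ROBOTS_TXT_BROKEN = "User-Agent: *\n\nDisallow:\n\nCrawl-delay: 3\n"


def crawl_delay_for(robots_txt: str, bot: str) -> Optional[int]:
    """Return the Crawl-delay (in seconds) that applies to `bot`, or None."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # crawl_delay() returns None while no fetch/parse time is recorded,
    # so record it explicitly.
    parser.modified()
    return parser.crawl_delay(bot)


if __name__ == "__main__":
    print(crawl_delay_for(ROBOTS_TXT, "bingbot"))            # 3
    print(crawl_delay_for(ROBOTS_TXT_PER_BOT, "bingbot"))    # 10
    print(crawl_delay_for(ROBOTS_TXT_PER_BOT, "YandexBot"))  # 3
    print(crawl_delay_for(ROBOTS_TXT_BROKEN, "bingbot"))     # None
```

Note that this parser only accepts whole numbers of seconds for Crawl-delay; a value such as 0.5 would be ignored.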